“No way he has written this!” Felt this way reading a LinkedIn post from a long-lost friend? He cannot write that well, you think. But that’s below the belt. Maybe he improved. A steep learning curve, but not impossible.

Yet something’s amiss. Then it dawns. The language, the idioms, the metaphors. All highly polished. All unmistakably American.

That’s simply not how we write. That voice, unmistakably Indian, is being quietly erased. Not because of censorship or cultural imperialism. The initial culprit was the ubiquitous autocomplete. The bigger culprit today is AI.

What replaces it

You recognise it immediately: the bullet point. The em dash. The subheading every three paragraphs. The paragraph that begins with “Here’s the thing:” or “Let me be clear:” or “The bottom line is this:”.

The setup before the point, gone. The proverb deployed mid-argument, gone. The long detour that hardly moves the story. Flagged as digression, corrected into a bullet.

That is the texture being lost.

The epistemic assembly line

The paper Epistemic Injustice in Generative AI (Jackie Kay, Atoosa Kasirzadeh, Shakir Mohamed) calls this the epistemic assembly line. The data that trained the dominant large language models is overwhelmingly American English. The content farms, the Reddit threads, the blog posts, the Stack Overflow answers, the Medium articles. When the model learns to write, it learns to write like that.

The paper argues that no stage of building an AI system (data collection, labelling, model training, deployment) is a neutral technical exercise. Each is a series of choices, and each choice embeds the cultural defaults of the people making it.

AI tools push content toward pattern, and SEO algorithms reward exactly that generic form. The two forces together create a feedback loop: generic content trains models that produce more generic content.

What gets lost

Indian English is not a corruption of British or American English. It is a distinct linguistic ecosystem, centuries in the making. When R.K. Narayan wrote, you felt Malgudi not merely as a place but as a grammar. When Raja Rao prefaced Kanthapura with his famous note about the tempo of Indian life, he was making an epistemological claim: that the way we tell a story is the story.

A study analysing over 740,000 hours of transcribed speech found that the word delve exploded in frequency after ChatGPT’s public release. People were unconsciously adopting the vocabulary of the AI they interacted with daily. We have reached a point where we should worry less about AI imitating us and more about us imitating AI.

When the voice becomes the product

The AI logic demands that you write in ways that resemble what the model has already learned to recognise as “good writing.”

The paper uses the phrase hermeneutical ignorance: when the system cannot make sense of an experience because it has no framework for it. The model has not been trained on the Illustrated Weekly of India’s best essays from the 1970s. It has not ingested the particular way a writer shaped by Telugu literary culture approaches an argument in English. It has not learned that certain kinds of indirection, what might be dismissed as “not getting to the point”, are rhetorical choices, rooted in a different speaker-audience relationship.

And so when an Indian writer uses these tools, the model corrects toward the only tradition it knows. American directness, informality and idiom.

Each pass of AI optimisation strips out anything the model finds unusual. Anything genuinely local, individual, strange in the way that real voices are strange. The final draft is clean, professional, optimised. Also: stripped of voice.

What the paper gets right

The paper proposes epistemic justice as a design principle: asking, at every stage of the pipeline, whose ways of knowing are represented and whose have been written out. “Standard English” is not a neutral technical category. It is a cultural choice made by people in particular places. The losers are the writing traditions that never made it into the training data because they were not where the servers were.

When a system makes certain voices illegible, it removes them from the conversation that shapes how a society thinks about itself. The Indian English literary tradition is not an academic concern. It is a form of collective knowledge. One that can be flagged as an anomaly by systems that were not built to hear it.

A parting note

Nick Cave said the model fast-tracks the commodification of the human spirit. He was right. But the specificity matters: it is not just the human spirit being commodified. It is the located spirit.

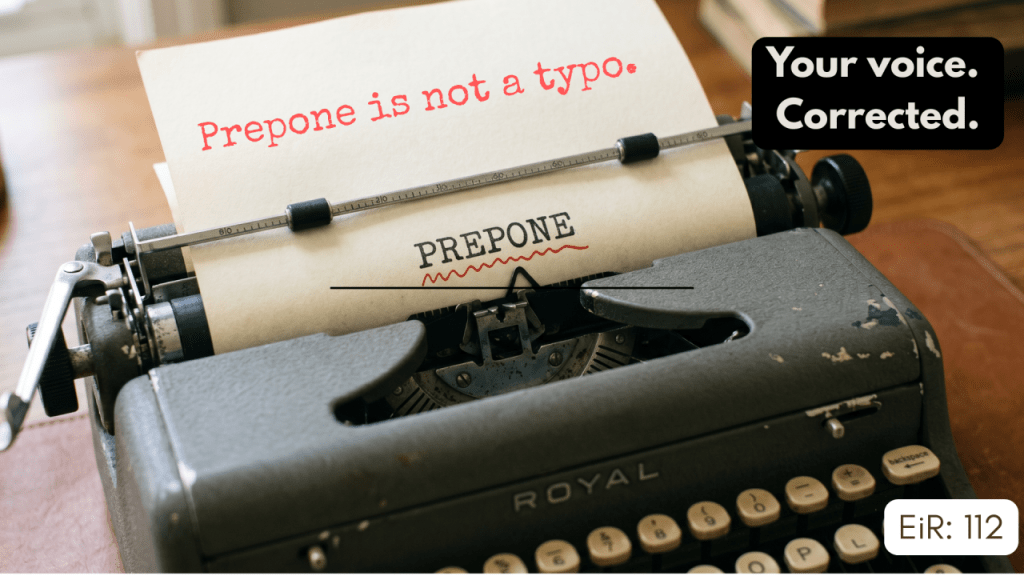

The spirit that belongs somewhere. It belongs when we liberally use “prepone”, a word that dictionaries were slow to recognise. It belongs when people ask, “What’s your ‘good’ name?” instead of “Whom do I have the privilege of meeting?” It exists where practically every speaker of the several languages I know leaves a house by saying “I’ll be back” right at the doorstep, just as they are actually leaving.

It belongs where many Telugu movies use “typical” to mean the atypical: when a character speaks of someone as a “typical” fellow, they actually mean the person is the odd man out. It belongs where most Hindi movies once used “by the by” when they meant “by the way”. It comes out when “Mister” is used as a pejorative for an arrogant person. It reveals itself in the convoluted form of respect that addresses someone as “sirjee”.

To AI, these are aberrations to be ironed out, oddities that need polish. But to an Indian, they provide a rich context and texture to the proceedings.

A machine that cannot tell the difference between the two will keep correcting us toward a version of ourselves that is cleaner, faster, and just a little less ours.

The machine has no coordinates. And no amount of productivity data changes that.
