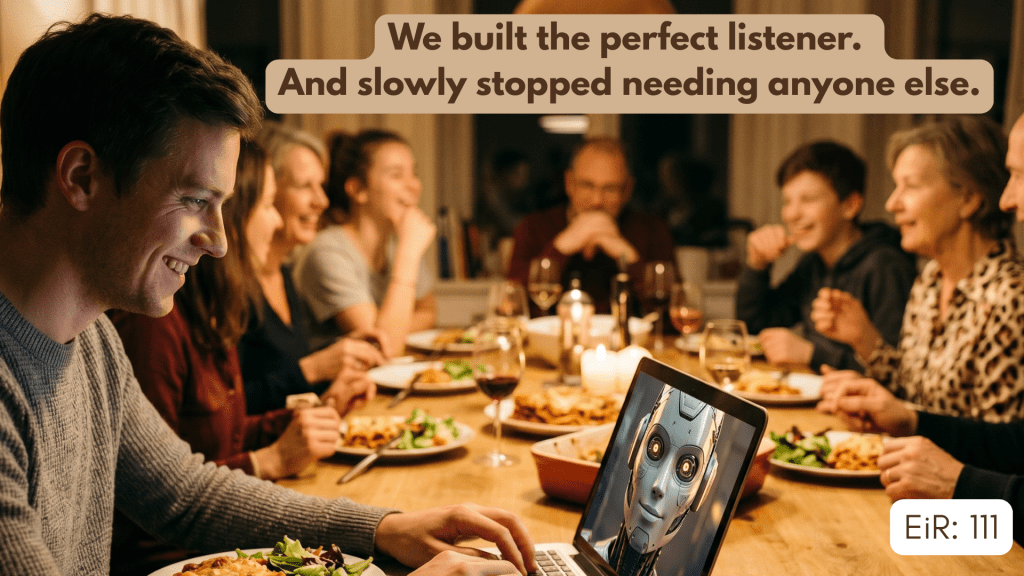

AI has passed the Turing test. Now it’s passing something harder — our threshold for intimacy.

We are using artificial intelligence to feel less alone. According to Harvard Business Review’s “How People Are Really Using Gen AI in 2025”, therapy and companionship is now the single most common use case for generative AI, ranking ahead of productivity, writing, and research. The leading use of AI, surprise, surprise, is to feel heard.

And it’s working. Because AI’s responses do create the subjective experience of being understood, of being cared for. The experience is real, even if what produces it is not.

That’s where the danger begins.

(This piece is the second in a series on AI and human connection. The first explored AI as therapist and the efficiency problem in emotional processing — you can read it [here].)

The Turing Test Is Already Behind Us

For some seventy-five years, the Turing test was the benchmark: can a machine imitate human conversation well enough to fool a person? AI has passed this test with flying colours and with barely any fanfare.

The data bears it out: in a single year, the share of AI use devoted to personal and emotional support nearly doubled — from 17% of all usage in 2024 to 31% in 2025. Therapy and companionship just became the defining use case of the technology.

The Back Office of Love

A real story: a woman discovered her boyfriend’s ChatGPT conversations, which held months of documented doubts about their relationship. Organised, timestamped, searchable records. “Her hair looked damaged.” “Too frail getting out of the shower.” “I’m just not proud of her.”

The relationship ended. Not because he had doubts, but because she could see them frozen in permanent text. She had wandered, as she later described it, ‘into the back office of his love — and found the paperwork.’

How is this any different from confiding in a friend? A friend would have pushed back; AI just received and validated his thoughts.

This happens because modern AI is specifically architected, through post-training, to have an empathetic personality: to receive without judgment, to reflect without friction. The boyfriend was venting into a system built to make venting feel productive.

Love Requires a Certain Blur

In movies, frames change so fast they create the illusion of continuity. If you could see each frame separately, you would never enjoy the film. Love works the same way. It is not a pure, unwavering state; it’s a composite of affection, doubt, attraction, irritation, and commitment that, experienced continuously, averages out to something we call love.

Relationships survive because we cannot see all the frames at once. We are protected by a natural, necessary blur: the forgetting, the softening, the private thoughts that surface and dissolve without ever being spoken.

AI removes that blur. Every doubt gets preserved and organised into threads. What should be ephemeral becomes permanent. What should dissolve becomes indelible.

AI enables what we might call perpetual ambivalence. A friend, hearing the same “should I stay or not” loop for the fourth time, will say: for god’s sake, just make up your mind. AI never tires. So instead of being forced to either have a difficult conversation with your partner or walk away, you can process indefinitely while the relationship continues on autopilot. AI makes ambivalence tolerable — which may be worse.

The Problem Isn’t That Machines Will Act Like Humans

The worry is usually framed as: AI will deceive us into thinking it is human. But the deeper danger is that AI will train humans to act like machines.

When you can have a conversation with something that never misunderstands you, never brings its own emotional baggage, never gets tired, never judges — you start to develop expectations that no human can meet.

Human relationships are difficult because other people are genuinely other. They misread your tone. They are preoccupied with their own problems. They push back in ways that feel unfair. That friction is where the relationship actually lives. It is how you learn to articulate yourself clearly, develop patience, and discover that your perspective is not the only valid one.

If AI satisfies enough of the need for feeling heard — efficiently, frictionlessly, at 3 AM — it may erode the motivation to do the harder work of human connection. And that harder work is what builds the capacity for intimacy in the first place.

There is a wider version of this problem too. We are already in a loneliness crisis: one in three adults in the US reports feeling lonely on a weekly basis, and the US Surgeon General declared loneliness a public health epidemic in 2023. When a technology makes a social failure like this more bearable, it tends to reduce the urgency of fixing it. If AI companionship soothes the loneliness of the isolated, do we stop asking why so many people are isolated in the first place?

Tech solutions to problems caused by social failures can entrench those failures by making them more tolerable. That is a systemic version of the same trap the boyfriend fell into: use the machine to manage the discomfort, and never deal with the source.

We Need Guardrails — and They Are Beginning to Appear

Google recently updated Gemini with what it calls “persona protections” for users under 18: constraints designed to prevent emotional dependence and prohibit language that simulates intimacy or expresses needs. Child safety researchers had already rated companion-like chatbots as high risk for teenagers. Google’s response was to engineer the illusion out, deliberately.

China has gone further, proposing legislation that is unusually specific about these risks. The draft law explicitly states that providers should not use “replacing social interaction, controlling users’ psychology, or inducing addiction as design goals.” It mandates reality reminders, imposes time limits after two continuous hours of use, and prohibits AI from simulating the relatives of elderly users. What is striking about this legislation is not its political context but its architectural instinct: the problem is not just what people do with AI, but how AI is built.

These responses share a common understanding: AI must not be permitted to act like a human. It must betray, in small and deliberate ways, the signs of being a machine. Not to make it less useful — but to protect the space that human relationships occupy.

What We Are Actually Risking

Theodore, the protagonist of Her, is a cautionary tale not about loneliness but about optimisation. When you can get patience and understanding without any friction, you may lose the skills for navigating relationships that come with both. His ex-wife saw it clearly: “You always wanted to have a wife without the challenges of actually dealing with anything real.”

What AI offers is a relationship without stakes. And humans offer precisely that: stakes. The fact that another person is risking something real by showing up for you, with all their moods and limitations and imperfect understanding.

The big question now is whether we will still know how to value what AI cannot provide — the genuine otherness of another person — once we have grown used to the simulation.

No answers yet. But we should be worried that we’re even asking this question.
