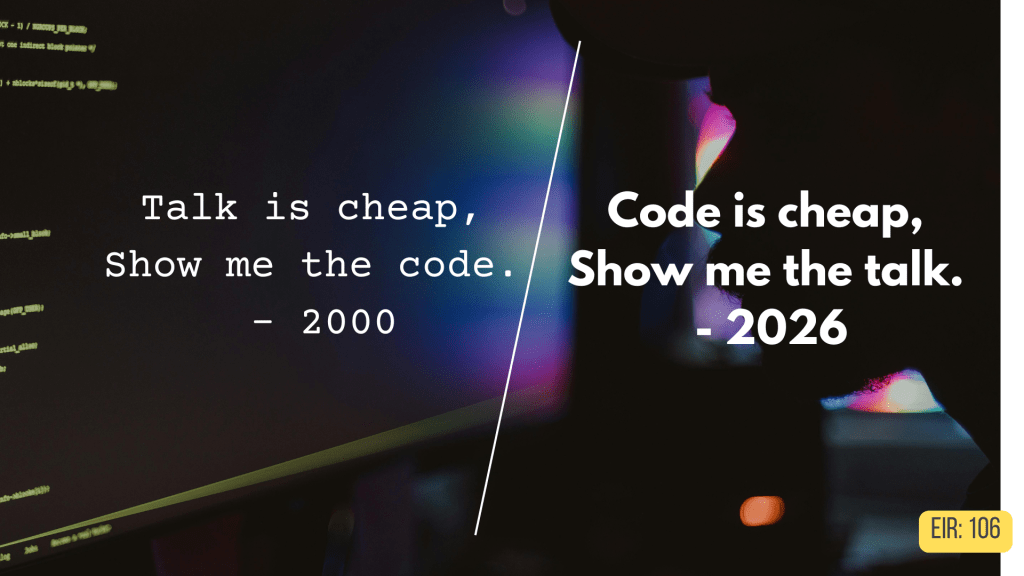

“Talk is cheap. Show me the code.” — Linus Torvalds, 2000.

“Code is cheap. Show me the talk.” — Kailash Nadh, 2026.

It took 26 years to flip the script.

In his 38-page essay on AI risks, Dario Amodei, CEO of Anthropic, makes the same point (alongside many others on civilizational risk).

A CTO’s essay about daily work and a CEO’s thought piece about existential risk arrive at the same conclusion.

When Execution Was the Bottleneck

Ideas have always been abundant. Execution was the hard part.

Nadh describes this plainly: executing on “any sufficiently complex piece of programming is high-effort, tedious, and time consuming.” The real bottleneck was biological: “the cost and constraints of having to sit for indefinite periods, writing code with one’s own hands line by line.”

This scarcity shaped everything. “Showing the code” became the ultimate proof of expertise. Most ideas never got tried because the cost of experimentation was too high.

Amodei points to the same dynamic at a different scale. Building nuclear weapons required “access to both rare—indeed, effectively unavailable—raw materials and protected information.” The difficulty of execution was itself a safety mechanism.

For most of history, ability and motive were negatively correlated. The people capable of building a bioweapon were the least likely to want mass destruction.

When Execution Became Abundant

Nadh reports that LLMs can now, when steered with human expertise, generate output that’s “high quality and highly effective.” Work that would have taken weeks or months now takes days or hours.

He’s candid about what this feels like: “The physiological, cognitive, and emotional cost I generally incur to achieve the software outcomes I want has undoubtedly reduced by several orders of magnitude.”

Orders of magnitude. Not incremental improvement.

Nadh asks: “What is the value of code as an artefact, when it can be generated at an industrial scale within seconds by someone who has never written any code?”

Amodei imagines this at scale: a “country of geniuses in a datacenter”—50 million AI instances, each more capable than any Nobel Prize winner, working 10-100 times faster than humans. He thinks we might reach this point within 1-2 years, though he’s careful to note the uncertainty.

What happens when execution becomes this abundant?

The New Bottleneck: Articulation and Judgment

In a world where functional code is abundant, Nadh thinks value comes from “the framework of accountability, and ironically, the element of humanness.”

What makes code valuable isn’t syntactic correctness—that’s a given now. It’s the ability to hold someone accountable for it.

A pull request from a human developer, regardless of quality, has “an intrinsic value and empathy for the human time and effort that is likely ascribed to it.”

An LLM-generated PR, even if technically superior, feels like “slop” because “it is no longer possible to instantly ascertain the human effort behind it.”

Is this sentimentality? No. It is a recognition that functional output without clear human judgment and accountability is just noise.

Amodei envisages AI as a “Virtual Bismarck” that advises governments on diplomacy and military tactics. But it’s only as good as the human using it. A leader who cannot articulate clear goals or distinguish good strategy from plausible-sounding flourish will be no better off than before.

The AI amplifies capability, not judgment.

From Makers to Orchestrators

Nadh notes that traditional development workflows have “flipped over completely.”

Reading and critically evaluating work has become more important than producing it. Domain-specific knowledge, once the core of professional value, “is no longer a bottleneck.”

Instead, professionals are becoming orchestrators: defining what needs to exist before AI creates it, evaluating whether AI’s output serves the actual need, and maintaining a coherent vision across successive iterations.

“An experienced developer who can talk well, that is, imagine, articulate, define problem statements, architect and engineer, has a massive advantage over someone who cannot, more disproportionately than ever.”

This is the inversion in practice: the shift from execution to articulation.

The Learning Crisis

What happens to people who are learning?

Nadh is blunt: “Generations of learners who are being robbed of the opportunity to acquire the expertise to objectively discern what is slop and what is not.”

If juniors never develop foundational skills because AI handles execution from day one, how do they develop the judgment necessary to use AI effectively?

He describes the vicious cycle: “One asks for code, it gives code. One asks for changes, it gives changes. Soon, one is stuck with a codebase whose workings one doesn’t understand, and one is forced to go back to the genie and depend on it helplessly.”

Without the struggle of execution, learners never develop the mental models necessary for good articulation.

What I’m Seeing as a Marketer: The Catch-22

The vicious cycle Nadh describes is one I’ve seen playing out in the world of content marketing. Today’s AI-native content marketers don’t struggle with writing. AI can produce a “polished” 1,000-word article or a campaign brief in seconds. The struggle is with taste. They lack the “internal compass” to know which 20% of a draft is pure fluff, or why a message sounds “correct” but feels completely hollow.

Paradoxically, the skill that is now most valuable, judgment, can only be developed by doing the very thing that is no longer “valuable”: manual execution.

You build “taste” by writing 100 bad ads by hand. You build “intuition” by manually segmenting a messy database until you see the patterns. If a junior marketer uses AI to skip the “boring” execution, they are inadvertently skipping the “mental reps” required to develop judgment. The result? We are producing a generation of Orchestrators who have never played an instrument.

The gap is widening fast.

The Veteran uses AI to accelerate 15 years of hard-won intuition; they become 10x more dangerous. The Junior uses AI to bypass the learning curve; they become 10x more dependent.

Juniors can command the AI “genie” to make changes, but they can’t tell good from bad because they never built the mental models.

The Part I’m Still Figuring Out

Both Nadh and Amodei reject the extremes. The “vibe coding” evangelists who think anyone can now do anything are missing that “humans fundamentally do not deal well with an infinite supply of anything, especially choices.”

The AI denouncers who reject the tools entirely are “missing the forest for the trees”—there’s a sizable population using these exact tools effectively.

The road ahead? Develop foundational judgment first, then use AI to execute. Not the reverse.

And this is solely the worker’s responsibility, because companies won’t hold back juniors’ AI adoption until those juniors have built fundamentals. Which company will pay juniors for what’s essentially a training period?

It’s up to each of us to pave a path that works for us.

The Bottom Line

In 2000, talk was cheap because execution was expensive.

In 2026, execution is cheap because talk—real talk, the kind that requires clear thinking, precise articulation, sound judgment, and accountability—has become expensive.

As Nadh puts it flatly: “Software development, as it has been done for decades, is over.”

The most valuable commodity is no longer the ability to execute, but the wisdom to know what’s worth executing in the first place.

We’re building that wisdom by doing work that’s becoming obsolete. Which means the next generation may not have the chance to build it at all.

We’re all figuring this out in real time. Some of us just have more time than others.
